Master thread on the 2015-2022 closure of the Internet [PINNED]

The process by which every major Internet platform went from broadly open, with a few basic guidelines, to strict narrative enforcement, often in collaboration with govts and with moderation power outsourced to NGOs.

YouTube was the most important platform for reaching The Youth and also uniquely compatible with monetization, allowing independent political/intellectual entrepreneurs to make a career.

------------------------------------------------------------------------------------------------

The most important platform to be closed off 2017-2019 was YouTube. Before 2017, YouTube was a very open platform, with easy monetization and almost no moderation of legal content. By the end of 2019, thoughtcrime (anything to the right of Ben Shapiro) was thoroughly purged.

In March 2017, several news organizations (The Times of London, the Guardian, WSJ) published coordinated articles about ads appearing next to "problematic" content on YouTube. This led to an advertiser boycott and to the British government summoning Google to explain itself.

[as an aside, no one sane believes that an ad appearing next to a YouTube video implies the company behind that ad endorses or knows about the content of the video; this was 100% astroturf. No one knew or complained until the news articles hit]

Google then began restricting ads/monetization to large accounts that had been manually reviewed, and greatly expanded the scope of its hate speech policy (for instance, banning insults "associated with marginalization" - no points for guessing who defines that).

YouTube participated in the August 2018 deplatforming of Alex Jones (along with Apple, Facebook, Spotify, LinkedIn, Pinterest, Vimeo, and Twitter), permanently banning him from the site. Jones' deplatforming was the start of a slippery slope.

YouTube expanded to more than 10,000 moderators in 2018. More than 90% of removed videos were seen by fewer than ten people.

1/?

In August 2018, YouTube also began banning state-sponsored content from Russia and Iran. This is less significant from a freedom-of-thought perspective, but I think it sets a hard "no later than" date by which US intelligence agencies were getting involved.

In January 2019, Google began algorithmically suppressing "borderline content," meaning content that did not violate its guidelines but that it judged could be harmful. Unlike bans, algorithmic suppression is silent and almost impossible to prove or fight.

By Dec 2018, Google was removing millions of videos per quarter, though these were mostly spam or scams (which I think is justified, since otherwise the site is unusable). But around 1% were removed for political reasons, and this number exploded in 2019 when moderation policy was changed.

Some of this zeal (more than 58M videos removed in Q3 2018) was to "demonstrate progress in suppressing problem content" to "government officials and interest groups [read: astroturfed NGOs]" who were (and are - see the UK's OSA) keen to control what is on YouTube.

In June 2019, YouTube demonetized and then banned Steven Crowder for calling a Vox journalist an "anchor baby" and a "lisping queer", which they acknowledged did not violate their policies [also note the Daily Beast calling insults "gay bashing," which they are not].

2/?

In Q2 2019, Google began "prohibiting videos alleging group superiority" [no prizes for guessing for which groups this was enforced] and as a consequence ramped up suspensions fivefold, banning more than 17,000 channels and deleting more than 100,000 videos and 500M comments.

Google eventually began banning "malicious insults based on protected attributes" [no points for guessing which attributes were protected or what counted as an insult] in December 2019.

In 2016, there was a thriving alt-center/"anti-woke"/anti-feminist ecosystem on YouTube, often connected to the New Atheists. By 2019, almost every major creator in it had been banned, demonetized, or turned leftist to survive, and the ecosystem had been replaced by anarchist/Communist "Breadtube."

Since The Youth are illiterate troglodytes, video content served by algorithmic feed is their major source of information, and until TikTok (which has similar policies), YouTube was by far the largest source of this (by orders of magnitude).

As such, the purging of anti-woke/anti-feminist creators from YouTube killed any sort of semi-popular intellectual opposition to the Great Awokening among people under 30. The only site more emblematic of the annihilation of open internet discourse 2017-2019 might be Reddit.

------------------------------------------------------------------------------------------------

Some sources/links:

https://variety.com/2019/digital/news/youtube-hate-speech-ban-channels-videos-removed-1203322079/

https://www.thedailybeast.com/youtube-punishes-steven-crowder-for-homophobic-attacks-on-gay-vox-journalist-carlos-maza/

https://publicknowledge.org/public-knowledge-welcomes-youtube-recommendation-changes-targeting-borderline-content-and-misinformation/

https://www.bankinfosecurity.com/google-youtube-accounts-content-linked-to-iran-a-11415

https://ashinaflash.wordpress.com/2017/08/26/the-youtube-adpocalypse-and-the-modern-journalistic-format/

------------------------------------------------------------------------------------------------

Reddit was known for its "anything goes" speech policy in 2015, and was the hub for text-based debate between normal people on opposing sides of issues. It turned into a leftist echo chamber to spite r/The_Donald.

In 2015, Reddit, like YouTube, had almost no content policy beyond banning illegal activity, doxxing, harassment, and involuntary or underage pornography. By 2020, Reddit had purged political dissent from the site.

Much of Reddit's shift was motivated by one thing: that r/The_Donald, the hub of internet Trump support, could consistently reach and dominate the front page. Reddit repeatedly changed their algorithm and policies specifically to suppress r/The_Donald before banning it.

The first major crack in Reddit's freedom of speech stance was in 2016, when the CEO of Reddit, Steve Huffman, was caught personally editing users' posts on r/The_Donald. He then changed Reddit's policy to exclude r/The_Donald from the r/popular Reddit homepage.

After Unite the Right in Charlottesville (August 2017), Reddit announced an expanded content policy against hate speech in October 2017 and banned around 20 subreddits.

1/?

In March 2018, Reddit began banning the (legal) sale of guns or prostitution in response to the Parkland mass shooting.

In September 2018, Reddit revamped their quarantine system (subreddits became invisible to non-members, killing growth) and began quarantining dozens of subs, including popular ones such as r/TheRedPill, for being "controversial" [ie, some activist wrote an article].

Also in September, Reddit changed its harassment policy from requiring fear for real-world safety to simply "anything that works to shut someone out of the conversation through intimidation or abuse," a much lower bar. This led to dozens more subs getting purged.

In March 2019, many subreddits were banned for "anti-Muslim content" after the Christchurch shooting, including r/Gore and r/cringeanarchy, neither of which was political.

2/?

r/The_Donald, the hub of Trump support on the Internet with 754,000 subscribers, was quarantined in June 2019, as was r/frenworld (for using Pepe the Frog memes as coded antisemitic messaging). r/The_Donald was banned for good in 2020, along with more than 2000 other subs.

In numbers: in 2019 Reddit quarantined 256 subs, banned 21,900 subs, suspended 55,994 accounts for policy (as opposed to eg spam) violations, and removed 222,000 pieces of content for policy violations. Moderators removed 84.1 million pieces of content.

One of the more difficult things to find concrete information on was the role of power mods. By 2020 six volunteer moderators had autocratic control over 118 of the top 500 subs; these individuals tend to be leftist and plausibly more powerful than the actual site.

In 2015, Redditors were almost universally hostile to the idea of censorship or banning "hate speech." By 2018, a significant minority were in favor. By 2020, most dissenters having been banned from the site, a majority were in favor.

Today, Reddit is notorious for its doctrinaire leftism, but it used to be a very ideologically heterogeneous site and the main discussion forum between different groups on the Internet. Nothing has successfully replaced 2016 Reddit for actual popular debate.

------------------------------------------------------------------------------------------------

Some sources/links:

https://www.engadget.com/2017-10-25-reddit-bans-more-racist-communites.html

https://www.socialmediatoday.com/news/reddit-releases-2019-transparency-report/572899/

https://en.wikipedia.org/wiki/R/The_Donald

https://www.researchgate.net/publication/341583849_Gaming_Reddit's_Algorithm_rthe_donald_Amplification_and_the_Rhetoric_of_Sorting

https://www.deccanchronicle.com/technology/in-other-news/170319/reddit-acts-against-hate-channels-following-new-zealand-mosque-attack.html

https://www.digitaltrends.com/social-media/reddit-rules-harassment-bullying-update/

https://www.newsweek.com/reddit-quarantine-subs-toxic-controversial-moderators-1144663

https://www.vice.com/en/article/reddit-bans-subreddits-dark-web-drug-markets-and-guns/

https://gizmodo.com/reddits-next-wave-of-community-bans-starts-today-1819849813

https://hal.science/hal-03609930v1/file/Reddit_hatespeech.pdf

https://www.reddit.com/media?url=https%3A%2F%2Fi.redd.it%2Fpyv0sdl2o0z41.png

------------------------------------------------------------------------------------------------

Amazon, with its dominance of the e-book and e-commerce markets, played a similar political commissar role for aspiring authors and merch sellers. Like the other major tech giants, it had little content policy beyond "no illegal content, spam or scams/fraud" in 2015, and by 2020 it had a well-developed censorship infrastructure for both the web store and AWS.

Amazon is particularly important for two reasons: (1) AWS, which makes it, like Google Search, a major Internet chokepoint, and (2) its 50% book and 80% e-book market share; Amazon banning a book is the closest a non-classified book can really come to being banned in the US.

The first cracks in Amazon's neutrality appeared in June 2015, when a media blitz and political pressure campaign (sparked by Dylann Roof's Charleston church shooting) led to Amazon removing all Confederate flag (a completely normal American symbol) merchandise from the site.

By August 2018, Amazon was banning "items that Amazon deems offensive," in this case Nazi-themed merchandise. Note the direct intervention of a Democratic lawmaker (Keith Ellison) - this was not a purely private endeavor.

Again in response to Congress, Amazon removed Proud Boys memorabilia in 2018 (the Proud Boys being a civic nationalist patriotic group that semi-regularly fought antifa); they would later do the same to QAnon. Needless to say, antifa merch was not pulled.

After a threatening letter from nine Democratic lawmakers in 2019, Amazon banned most gun accessories, parts, and ammunition from the site, including basic things like slings and rails.

By 2019, Amazon was accustomed to taking listings down in response to news articles [not "online outrage", important distinction], such as innocuous (really) Auschwitz Christmas ornaments (it was part of a series of ornaments themed around Polish cities).

1/?

Mein Kampf was removed in 2020. Needless to say "Quotations from Chairman Mao" and "The Wretched of the Earth" were not.

By 2021, even a fairly academic and tame critique of the transgender movement [which barely existed 10 years prior], "When Harry Became Sally", could be banned from Amazon and thus cut off from any mass audience.

Amazon started using AWS against legal sites in 2019 (it had kicked WikiLeaks off in 2010, but on the grounds that WikiLeaks was illegal), threatening another hosting provider, Epik, for providing services to 8chan (which was not hosted on AWS directly).

They did the same with Gab, another right-wing Twitter alternative (which was also banned by Microsoft Azure).

Amazon Web Services was politicized dramatically in 2021 when it kicked Parler, an alternative to Twitter that gained popularity when Trump was banned, from the platform, destroying the app (which was simultaneously banned by both Apple and Google from their app stores).

Because Amazon is not a social media site, its moderation/content removal/censorship apparatus is much less noticeable than those of YouTube, Reddit, Twitter, or Facebook, but it was built around the same time (2015-2019) and performed (and performs) similar functions.

I should mention that I have not touched COVID/lockdown-related removals.

Subjectively, one of the big differences between Amazon and Google/YouTube/Reddit was the importance of US Congress; direct threats from Democratic lawmakers precipitated several major steps on the censorship ladder, whereas the EU and UK were more important for the others.

------------------------------------------------------------------------------------------------

Some sources/links:

https://geekwire.com/2019/amazon-seeks-root-ties-8chan-tech-firms-grapple-implications-extremist-sites/

https://techcrunch.com/2021/01/09/amazon-web-services-gives-parler-24-hour-notice-that-it-will-suspend-services-to-the-company/

https://ncac.org/news/amazon-book-removal

https://thenation.com/article/economy/throwing-the-book-at-amazons-monopoly-hold-on-publishing/

https://buzzfeednews.com/article/leticiamiranda/amazon-removed-proud-boys-merchandise

https://abcnews.com/Technology/amazon-pulls-auschwitz-death-camp-ornaments-online-outrage/story?id=67431874

https://web.archive.org/web/20201014180015/https://www.menendez.senate.gov/newsroom/press/menendez-leads-message-to-big-tech-close-your-gun-shopping-loopholes

https://scotsman.com/read-this/amazon-is-banning-the-sale-of-hitlers-book-95-years-after-publication-heres-why-2505935

https://time.com/3932645/amazon-confederate-flags/

------------------------------------------------------------------------------------------------

Twitter, which dominated among cultural elites (journos, academics, politicians), went from "the free speech wing of the free speech party" to an extension of a partisan FBI, with many different tiers of algorithmic manipulation for disfavored stories.

In 2015, Twitter was "the free speech wing of the free speech party" according to CEO Jack Dorsey, even avoiding collaboration with the NSA (unlike Google, Facebook). By 2019 it was one of the most censored, monitored, and controlled social media networks in the world.

YouTube was the biggest and most monetizable platform, Reddit the most important discussion forum, Amazon essential for authors and websites, and Google Search (we will touch on this later) the only way to surface niche info sources. Twitter mattered as the social network of the intelligentsia.

In 2015, Twitter, under general counsel Vijaya Gadde, began reinterpreting its existing rules much more broadly and banned hate speech, to "keep Twitter safe." Chuck Johnson was banned for tweeting that he would "take out" (attack digitally, not murder) a BLM activist.

Twitter's informal stance had already started changing, but the big formal changes to the rules began in 2016, when it no longer promised not to censor user content that didn't break the rules ("limited circumstances described below").

In February 2016, Twitter established its (in)famous "Trust and Safety" council, a body which networked censorship/moderation/activist expertise around the world to inform Twitter policy.

1/?

In 2017, Twitter formally moved against "hate symbols" and "unwanted sexual advances." The blue check aristocracy began to take form too, with Rose McGowan's followers impelling this change (on her behalf) after she was temporarily locked for posting someone's private number.

In 2018, Twitter banned "misgendering" transsexuals, and laid out which groups got special protection ("women, people of color, lesbian, gay, bisexual, transgender, queer, intersex, asexual individuals, and marginalized and historically underrepresented communities").

Twitter also began shadowbanning prominent Republican politicians in 2018, though they later claimed this was a bug and backtracked when Republicans attacked them for it.

High profile bans included Milo Yiannopoulos (338,000 followers, July 2016), major Trump advisor Roger Stone (October 2017), Gavin McInnes and the Proud Boys (August 2018), Alex Jones (coordinated with other platforms, Sept 2018), Laura Loomer (265,000 followers, Nov 2018).

2/?

In the first half of 2018, Twitter actioned 250,806 accounts for hateful conduct. This increased by 54% by the second half of 2019.

Some revelations from Elon's Twitter files:

1) The FBI had a dedicated task force of 80 agents and a one-way communication channel called Teleporter to flag posts, even joke posts from tiny accounts

2) All of the conspiracies about shadowbans were totally vindicated; Twitter had separate lists for Trends blacklists, Search blacklists, and "Do Not Amplify" (which included Charlie Kirk), with multiple levels of visibility filtering and internal bodies to administer them

I have deliberately avoided getting into the 2020-2022 intensification of censorship/narrative control (eg putting notes on Trump's posts and banning him, Hunter Biden's laptop, everything related to COVID, lab leak, and the lockdowns).

------------------------------------------------------------------------------------------------

Some sources/links:

https://stoppingsocialism.com/2023/01/the-twitter-files-comprehensive-summary-analysis-and-discussion-of-ramifications-for-american-institutions/

https://techcrunch.com/2020/08/19/twitter-claims-increased-enforcement-of-hate-speech-and-abuse-policies-in-last-half-of-2019/

https://committees.parliament.uk/writtenevidence/103266/html

https://nbcnews.com/tech/tech-news/twitter-permanently-bans-conspiracy-theorist-alex-jones-website-infowars-n907261

https://cbsnews.com/news/proud-boys-gavin-mcinnes-twitter-suspension-today-unite-the-right-2018-08-10/

https://cnn.com/2017/10/29/politics/roger-stone-twitter/index.html

https://vice.com/en/article/twitter-appears-to-have-fixed-search-problems-that-lowered-visibility-of-gop-lawmakers/

https://web.archive.org/web/20250108124457/https://www.vice.com/en/article/twitter-is-shadow-banning-prominent-republicans-like-the-rnc-chair-and-trump-jrs-spokesman/

https://cnn.com/2023/04/19/tech/twitter-hateful-conduct-policy-transgender-protections/index.html

https://medianama.com/2017/10/223-twitter-revises-online-harassment-policy/

https://npr.org/2022/12/12/1142399312/twitter-trust-and-safety-council-elon-musk

https://vice.com/en/article/the-history-of-twitters-rules/

https://dailydeclaration.org.au/2023/01/09/helpful-summary-of-the-twitter-files-so-far-with-matt-taibbi/

------------------------------------------------------------------------------------------------

Facebook's closure was closely linked to German, EU, and British pressure after it was (mostly wrongly) blamed for opposition to the 2015 migrant crisis and for Brexit. It was significant because Facebook allowed the Internet to reach the Great Boomer Voter Mass.

The most interesting thing in Facebook's evolution from mostly-free (albeit without pseudonymity) platform to aggressively controlled between 2015 and 2020 is how involved European governments were in the process (thread).

The most important thing about Facebook is that it can be used to reach the great mass of Gen X and Boomer adults who are not Internet natives and comprise most swing voters. Dark Facebook Manipulation from Russia and Vote Leave was blamed for both Trump 2016 and Brexit.

These two events provoked an avalanche of books, news articles, government reports, NGOs, and hearings (including in 2018 in the US Senate) about how Facebook microtargeting would end democracy. Example:

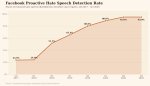

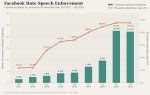

Facebook did not change its formal policies as much as Twitter/Reddit/YouTube did, but it transformed how the platform surfaced and moderated political content. Facebook began reporting its "hate speech" moderation metrics in Q4 2017; within three years, such content had been nearly erased from the site.

The amount of content actioned for hate speech steadily rose 2017-2020, exploding to more than 22 million posts in Q2 2020 when the lockdowns (which intensified Internet closure across all platforms) began.

Facebook hired an additional 3000 moderators in 2017.

In 2017, Facebook began flagging 'misinformation'; by 2019 this had been outsourced to third party fact-checkers (who, of course, were not ideologically neutral), who gained the ability to flag, deboost, and eventually remove posts at will.

<second attachment in next post>

1/?

Germany's Network Enforcement Act (NetzDG) imposed large fines on platforms that failed to remove illegal (incl political) content. Since there were no fines for over-enforcement, this encouraged Facebook to censor aggressively. Officially-recognized journalists were exempt.

One of the privileges of the Fourth Estate of our present ancien regime is freedom of speech - the ability to avoid social media censorship.

As with YouTube, the British government was extremely important in the closure of Facebook. The Brexit referendum was blamed on a (hallucinated) microtargeting narrative (Cambridge Analytica), which was then used to justify a then-novel suite of legal speech controls.

Dominic Cummings, who ran Vote Leave and wrote down what he was doing and how he did it both before and after the referendum, pointed out that this "dark adverts" narrative is nonsense from journos who don't understand what A/B testing or focus groups are.

https://dominiccummings.com/2018/05/18/on-the-referendum-24h-facebook-data-science-technology-elections-and-transparency/

------------------------------------------------------------------------------------------------

Some sources/links:

https://annenbergpublicpolicycenter.org/wp-content/uploads/NetzDG_TWG_Tworek_April_2019.pdf

https://gov.uk/government/news/uk-to-introduce-world-first-online-safety-laws

------------------------------------------------------------------------------------------------

Subplot:

An admin of one of the biggest right-wing Facebook groups shared his impressions of, and experience with, Facebook moderation and censorship with me in a DM session. RW Facebook was big in 2016/17.

The big crackdown began in summer 2017; it did not take the form of bans for hate speech but rather all publicly-known admin accounts getting suspended for no reason, leading to the pages disappearing.

This included device bans which permanently destroyed most of the pages.

The unexplained admin purges were more effective than the page deletions, in part because normie conservatives rallied against censorship but couldn't really grasp the implications of extremely opaque account removals.

The interesting thing is that the non-public admins did not get taken down, even though they would've been easily visible from inside Facebook, which implies that the crackdown was using lists of accounts compiled by outside groups (probably academics).

<second attachment in next post>

1/?

He pointed me to this sophisticated and accurate network analysis from German academia as an example of what the outside sources behind the crackdown could possibly have been.

In addition to the mass account banning, Facebook also used shadowbanning, cutting my source's reach by over 99%. According to him, both the shadowbans and account purges stopped the instant Biden was inaugurated in 2021, though he no longer talks about politics.

On the state of the shadowbans: a page with >40K followers and no official restrictions was getting literally 1-2 engagements per post, and this suddenly changed after Biden's inauguration.

<second attachment in next post>

2/?

Facebook had several major advantages over RW X/Twitter: it was more personal, slower-paced and hence more careful and less sloppy, and had better group functions (and hence more privacy) and better vetting. The heyday of RW Facebook lasted ~1-1.5 years.

My source believes the suppression of RW Facebook, with its relatively higher standards, paved the way for the current degenerate grifter dominance on the e-Right, as the purges destroyed any sort of internal group hygiene/emergent standards.

My source's view on the state of e-right discourse: much of it is bad because even the more intellectually-minded tend to have little general knowledge and base everything off a small number of books (I concur, people should read more mainstream history for a decent fact base).

In my source's judgement: Facebook's censorship campaign came from govt (esp EU)/NGO/academic pressure, not antifa and not within Facebook itself either (hence missing private admins). All vanished as soon as Biden was in office.

Source confirms my belief that the chilling effect of censorship (though unmeasurable) is much bigger than the direct effect.

This happened right *before* Unite The Right/Charlottesville, which meant that my source and some of his Facebook friends were unable to warn against attendance.

------------------------------------------------------------------------------------------------

Source of network analysis: https://oilab.eu/from-vaporwave

------------------------------------------------------------------------------------------------

Apple's role was more through intimidation/chilling effects than direct censorship; there were only a few removals but given Apple mobile dominance they had a big effect.

From 2016 to 2023, Apple's App Store, half the mobile duopoly, went from a curated software marketplace to one of the most important content control systems on Earth.

In June 2016, Apple completely reorganized their App Store Review Guidelines into five pillars: Safety, Performance, Business, Design, and Legal.

Most of Apple's big decisions were not policy ones but specific removals that had a chilling effect on future discourse. In Aug 2018, Apple removed 5/6 Alex Jones podcasts for hate speech. This was done jointly with similar actions from Facebook, YouTube, and Spotify.

One demonstration of Apple's market power and the effect of its content control apparatus was its treatment of Tumblr. Apple removed Tumblr from the App Store in 2018 over CSAM, which forced Tumblr to ban all NSFW content and led to a 33% drop in users.

Apple also removed all vaping-related apps from its store in Nov 2019.

Apple was one of the biggest players (along with Amazon and Google) in the destruction of Twitter alternative Parler in 2021 (banning them from the app store), explicitly because Parler's moderation practices were not to their liking.

Gab, another Twitter alternative, was never granted app store access in the first place, first being rejected in Dec 2016.

Apple has been extremely accommodating of the Chinese government's demands for data access and content control, banning VPN apps in China in 2017 and giving the Chinese government unrestricted access to Chinese users' iCloud data.

Apple also banned an app used by Hong Kong protestors in 2019 and removed the Taiwanese flag emoji at the CCP's request. Approximately 3200 apps are missing from the Chinese app store, roughly 1/3 of which are for political reasons.

Replies:

>>12584

Apple also did things like limiting AirDrop in China just weeks before the 2022 anti-lockdown protests and banning Bible and Quran apps in China.

In the ~1989-2008 US debate wrt China, China bulls like Bill Clinton claimed that market access would make Chinese values more like the US, but the reverse happened, because the PRC learned to use its market power and supplier monopoly to coerce Western entities.

Tim Cook, to the ADL: "our values drive our curation systems" and "tech companies must stand by their values and remove content that promotes hate and white supremacy."

------------------------------------------------------------------------------------------------

Some sources/links:

cnbc.com/2018/08/06/apple-pulls-alex-jones-infowars-podcasts-for-hate-speech.html

https://cnbc.com/2018/12/04/apple-ceo-tim-cook-says-hate-has-no-place-on-tech-platforms-at-adl.html

https://cnbc.com/2022/11/30/apple-limited-a-crucial-airdrop-function-in-china-just-weeks-before-protests.html

https://fortune.com/2021/10/15/apple-china-censorship-religious-apps-quran-bible/

https://techtransparencyproject.org/articles/apple-censoring-its-app-store-china

https://expressvpn.com/blog/china-ios-app-store-removes-vpns/

https://thehackernews.com/2018/07/apple-china-icloud-data.html

https://newsweek.com/hkmap-apple-ios-mobile-pulled-app-store-hong-kong-protests-china-1464318

https://qz.com/1723334/apple-removes-taiwan-flag-emoji-in-hong-kong-macau-in-ios-13-1-1

https://inc.com/salvador-rodriguez/gab-apple-inauguration.html

https://techcrunch.com/2021/01/08/parler-removed-from-google-play-store-as-apple-app-store-suspension-reportedly-looms/

https://qz.com/1751120/apples-vaping-app-ban-wont-hurt-many-non-cannabis-vapers

https://en.wikipedia.org/wiki/Tumblr

https://www.appstorereviewguidelineshistory.com/articles/2016-06-13-totally-rewritten-wwdc/

------------------------------------------------------------------------------------------------

You might say "it's OK, it's the Internet, even if you're kicked off the major platforms you can make your own website/forum and people can find you"... except Google also changed their search algorithm to avoid non-mainstream sites and sources. Try finding Cyberix on Google right now.

It is stunning how quickly the Internet was closed off 2017-2023. Perhaps most importantly, Google began politicizing search results in April 2017 with "Project Owl," which sought to suppress "problematic searches" [their term, not mine].

To accomplish this, Google began removing "problematic" autocomplete selections. Since then, there have been a number of cases of Google's autocomplete bias getting so heavy-handed it went viral on other platforms, but the thumb on the scale is usually invisible.

Google also began manual rating/curation of their "featured snippets" answers.

Google also began boosting, in their own words, "authoritative" content in search results. This meant more mainstream news articles, Wikipedia, government pages, Reddit, and NGO reports, and fewer blogs, forums, and independent writing that might be a better match.

Project Owl was one of the prototypes for a wave of similar measures at other platforms. The playbook looked something like this: (1) find something "problematic" [eg Holocaust denial] on the platform that no one would associate with the company itself [in the case of Google, less than 0.25% of results]; (2) run an astroturfed media campaign "criticizing" the company (sometimes with an ad boycott thrown in); (3) sympathetic prog insiders at the company work to close down the platform, usually using mainstream media or disinformation NGOs as epistemic authorities (and sometimes three-letter agencies).

This is separate from YouTube, Gmail, or more recently Gemini, all of which have had similar campaigns. Google is probably the most powerful company in the world.

------------------------------------------------------------------------------------------------

Source of images:

https://searchengineland.com/googles-project-owl-attack-fake-news-273700

------------------------------------------------------------------------------------------------

Replies:

>>12587

..and if you can survive getting booted off every major platform and Google search, your cloud providers/payment processors/DDoS protection/ISPs/domain registrars might coordinate to nuke you off the Internet anyways.

Deplatforming of websites time! This is when private web infrastructure actors (cloud providers, payment processors, DNS providers, certificate authorities, DDoS protectors) coordinate to purge websites. Several layers of the web stack are oligopolies, so this can happen sans explicit coordination.

The first major case of infrastructure-level deplatforming was WikiLeaks in 2010. After it released illegally obtained classified information (US diplomatic cables, the so-called "Cablegate"), it was cut off by AWS, EveryDNS, PayPal, Visa, Mastercard, and Bank of America.

The attacks on WikiLeaks were not ideological ones (they were committing actual crimes as a website), but the tactics (both deplatforming and extremely bogus sex "scandals" like sending love letters to a 19-year-old) presaged later efforts during the Great Awokening.

The first major ideological deplatforming was that of the Daily Stormer in 2017. First GoDaddy, then Google Domains, then the registries for several country-code and other domains (.ru, .al, .at, .is, .cat) kicked the site off. Then SendGrid and Zoho terminated its email and SaaS services.

The coup de grace was Cloudflare terminating DDoS protections, which effectively terminated the site. The entire deplatforming process took four days (GoDaddy terminated their domain Aug 13, Cloudflare acted on Aug 16).

Unlike WikiLeaks, the Daily Stormer was not doing anything illegal. But they were an explicitly Neo-Nazi website and so attracted little defense, and none at all from lawmakers. Unsurprisingly, then, this tactic quickly escalated to more benign sites.

Gab is a Twitter alternative. It is a right-wing site, but more BlueSky than Daily Stormer. In Oct 2018, GoDaddy dropped its domain, its hosting provider Joyent terminated it, PayPal and Stripe blocked payments, and Backblaze terminated cloud storage.

1/?

Replies:

>>12588

8chan, an unmoderated 4chan alternative, was deplatformed in 2019, first by Cloudflare, then by a sequence of smaller providers (Alibaba Cloud, Zare UK, Tucows); 8chan never used AWS/Azure/Google. Their domain registrar, Epik, was then itself deplatformed by Voxility.

Parler, like Gab, was a Twitter alternative, but where Gab primarily appealed to deplatformed alt-righters (but again, was and is a social media site), Parler had a much more normie MAGA userbase. Parler was deplatformed in 2021.

Parler was first banned from Google's and Apple's app stores (combined, a near monopoly on mobile), then dropped by its cloud provider (AWS), then by payment processors (Stripe, American Express) and SaaS providers (Slack, Okta, and Zendesk).

Parler sought a temporary restraining order against Amazon on antitrust grounds (alleging that Twitter was conspiring with Amazon to crush a competitor), but federal judge Barbara Rothstein denied it.

This effectively destroyed the site; Parler was able to restore service eventually but lost 96% of its user base.

At this point, deplatforming was so normalized that Kiwi Farms, a random small forum, was deplatformed in 2022 for hosting threats against trans streamer Keffals: first by Cloudflare, then by a smaller anti-DDoS provider (DDoS-Guard).

2/?

Replies:

>>12589

The biggest escalation in the Kiwi Farms deplatforming (again, a random forum, not an important or major site) was that, for the first time, a Tier 1 internet service provider (ISP), Hurricane Electric, joined in.

In several of these cases (Daily Stormer, Gab, Kiwi Farms), the sites were/are run by very technically skilled individuals who could replace the deplatformed services themselves (eg Kiwi Farms' Joshua Moon built his own Cloudflare alternative), but their reach was still gone.

(And of course, "you need to be personally capable of replacing the entire Internet and SaaS tech stack by yourself to operate" will kill almost all sites).

Even the "domain registrar of last resort," Epik, which simply provided domain registration to other sites, was itself destroyed: hackers affiliated with Anonymous breached it in 2021 and leaked 15M email addresses, leading the owner to sell it and the new owners to cease providing services.

First, Neo-Nazi websites got deplatformed, then social media sites with heavily alt-right userbases, then unmoderated image boards, then sites catering to normie MAGA, then random forums. This worked to disarm opposition; no Senator was going to stand up for the Daily Stormer.

A common retort to people complaining of censorship is "make your own [Facebook/Reddit/YouTube etc]". But if you do that, can you also replace domain registration, payment processing, cloud hosting, DDoS protection, app store access, SaaS services, and even your ISP by yourself?

Some troglodytes will say mass purges of websites from the Internet by PRIVATE infrastructure providers are OK. These people are natural slaves with zero appreciation for freedom of speech/expression/association who love the idea of a loophole around the First Amendment.

------------------------------------------------------------------------------------------------

Some sources/links:

https://en.wikipedia.org/wiki/Epik

https://eff.org/deeplinks/2021/01/beyond-platforms-private-censorship-parler-and-stack

https://cato.org/blog/brief-history-deep-deplatforming

https://eff.org/deeplinks/2023/08/isps-should-not-police-online-speech-no-matter-how-awful-it

https://gnet-research.org/2025/12/23/cutting-a-hydras-head-infrastructure-level-content-moderation-and-the-case-of-kiwi-farms/

https://knowyourmeme.com/news/cloudflare-blocks-kiwi-farms-which-is-now-out-of-lifelines-and-potentially-dead-for-good

https://nbcnews.com/tech/internet/cloudflare-kiwi-farms-keffals-anti-trans-rcna44834

https://techcrunch.com/2021/01/21/judge-denies-parlers-bid-to-make-amazon-restore-service/

https://cnn.com/2021/01/13/tech/parler-violent-groups-deplatformed

https://npr.org/2021/01/09/955329265/amazon-and-apple-drop-parler

https://blog.cloudflare.com/terminating-service-for-8chan/

https://nbcnews.com/tech/tech-news/network-provider-cloudflare-drop-8chan-after-el-paso-shooting-n1039151

https://cnbc.com/2017/08/17/cloudflare-ceo-says-removing-the-daily-stormer-is-slippery-slope.html

https://newsweek.com/daily-stormer-andrew-anglin-charlottesville-657468

https://techcrunch.com/2017/08/15/after-charlottesville-more-web-service-providers-ditch-the-daily-stormer-for-tos-violations/

https://ashishb.net/tech/cablegate-and-the-aftermath-a-few-observations/

------------------------------------------------------------------------------------------------
